
Organic User Interfaces: Designing Computers in Any Way, Shape or Form

by David Holman and Roel Vertegaal

Introduction

Today’s computers can process information at incredible speeds and can store and display data in many different forms. But when we compare what we can do with the physical shape of these computers to what we can do with other real-world tools, a lot seems to be lost. A simple piece of paper, for example, can hold graphical or written information, yet it can also be folded into almost any shape, wrapped around products, or torn into bits and recycled entirely. Try doing that with your BlackBerry.

Figure 1. FEEK pebble lights by Karim Rashid [7].

The chief reason for the limited shapes of today’s computers is the rigid, planar structure of their LCD screens. The need to fit and protect the LCD, keyboard and electronics makes the laptop rigid too. In this article, we argue that this planar rigidity in interface design limits the usability of our computers by restricting their possible affordances.

The world of design around us is filled with “blobjects” [7]: tools with curved forms. Organic shapes are employed by the likes of Karim Rashid and Frank Gehry, who aptly apply materials like rubber, plastics, and even curved sheet metal in their designs. The first property that appears to be missing in computer displays, then, is the ability to take on any organic shape, e.g., curved like the light fixtures in Figure 1. Such displays have clear advantages, e.g., when working with curved data sets like 3D models or geographical information. Another missing property is deformability. While fashion designers like Yves Saint Laurent take deformability for granted, it is not at all common in human-computer interaction. Yet deformability eases many real-world tasks, like storing things, or reading this magazine article. The page flip is, in fact, a wonderfully effective way of navigating documents: its affordance, and its ability to open a document at a random location, are not easily mirrored by a mouse click. Deformability also allows tools to adapt their functionality to different contexts: a newspaper serves equally well for reading the news and for wrapping fish. Clearly, this kind of shape-shifting flexibility is not found in your average e-book reader.

But might it one day be? New display technologies, such as the E-Ink and Organic Light Emitting Diode (OLED) displays by Polymer Vision and Sony, mimic not only the high contrast but also the deformability of paper, potentially making Flatland interfaces a thing of the past. Interaction designers and researchers around the world are starting to work with alternative, virtually analog, degrees of input. This design stream aims to develop computers that can take on any shape or form: from pop can to Lumalive jacket.

Organic Design: Natural Morphologies as Inspiration for Industrial Design

With displays in any form will come a wealth of interactive blobjects that literally shape their own functionality: e-book readers that page down upon a flick of the computing substrate, or pop cans with browsers displaying RSS feeds and movie trailers. When pondering the design space of such future blobject computers, the anatomies and morphologies of biological organisms form an interesting source of inspiration. Natural organisms rely almost exclusively on flexible materials and non-planar shapes. The leaves of plants, for example, form resilient solar panels that bend rather than break when challenged. They are not merely flexible enough to adapt to their environment; they also grow and adjust their shape to maximize solar efficiency. Computers may one day do just that. Haeckel’s Art Forms in Nature [1] was one of the first catalogues to celebrate organic morphologies. The book, which came out in 1904, was an instant hit with designers protesting modern industrialist art. Art Nouveau designer Constant Roux even used one of Haeckel’s plates on invertebrate morphologies as the inspiration for a chandelier (see Figure 2a).

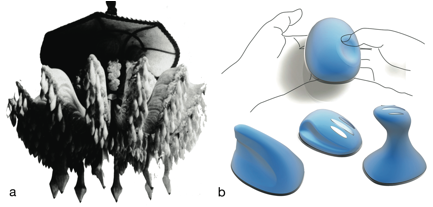

Figure 2a) Chandelier with jelly morphology (C. Roux, circa 1907)

b) Concept moldable mouse with jelly anatomy (Lite-On, 2007)

Organic Architecture: Balancing Industrial with Natural Design

Haeckel’s radiolarians also inspired Art Nouveau architect René Binet’s entrance gate to the 1900 Paris World Expo. But within 10 years, the forces of industrialism would supersede Art Nouveau with their engineering aesthetics. Only in 1939 would American architect Frank Lloyd Wright challenge the Modernist perspective, coining the term Organic Architecture [3] to capture a new philosophy promoting a better balance between human and natural design. His most famous work, Fallingwater in Pennsylvania, still stands as one of the great triumphs of 20th-century American design. With Fallingwater, Wright did not intend to copy nature, as his Art Nouveau predecessors had. Instead, he created a perfect harmony between the aesthetics of nature and those of modern architecture. Its concrete cantilevers, suspended over the ever-changing flows and rocky outcroppings of the waterfall over which they were built, allowed Wright to literally balance the planar geometries of modernity with the irregular flows of natural design. Wright, who had lived in Tokyo, was perhaps inspired by Japanese design philosophies like wabi-sabi, which emphasize natural imperfection and impermanence over the controlled, planar perfectionism of the West.

Tangible and Ubiquitous: Embedding Computers in the Natural World

Indeed, what might computers look like if they were designed with a little more wabi-sabi? More curved, like a piece of earthenware; more flexible, like a sheet of Japanese washi paper; and more delicate, like hand-made knitwear? Perhaps a good start would be something like PingPongPlus, one of a string of Tangible User Interfaces designed by Professor Hiroshi Ishii’s research group at the MIT Media Lab in the mid-nineties. Professor Ishii was amongst the first scientists to design computers that were completely integrated into the user’s natural ecology. His goal was to reduce the computer’s heavy demands on users’ limited-capacity focal attention, in favour of faster, high-capacity, peripheral channels of perception.

PingPongPlus (1997) was a table tennis table featuring a video projection of water, along with a school of fish. Whenever the ping-pong ball hit the table, its position was sensed using microphones. A hit caused the water projected on the table surface to ripple on the spot, and the virtual fish to scatter. By combining projection on a planar surface with natural objects tracked as input, tangibles could seamlessly integrate computer elements into a physical game without affecting its speed or physicality. Tangibles allowed for a tighter coupling between input and output, or, as Professor Ishii more aptly put it, between bits and atoms. However, tangibles could not display directly onto most input objects (such as the ball), as rigid displays are difficult to integrate into non-planar objects. They also could not track multitouch coordinates on the surface of such objects. And because their shape was not actuated, consistency between bits and atoms could not always be maintained. As a consequence, tangible designs focused largely on the use of objects as input devices.
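How might a table locate a ball from sound alone? The PingPongPlus signal processing is not described here, so the following is only a minimal sketch under stated assumptions: four microphones at the table corners (positions we chose for illustration), sound travelling at the speed of sound in air, and time-difference-of-arrival (TDOA) localization by brute-force grid search. Only differences between arrival times are used, so the unknown moment of impact cancels out.

```python
import math

SPEED_OF_SOUND = 343.0          # m/s in air; assumed, the real speed depends on the sensing setup
TABLE_W, TABLE_H = 2.74, 1.525  # regulation table tennis table, in metres

# Hypothetical microphone positions: one at each corner of the table.
MICS = [(0.0, 0.0), (TABLE_W, 0.0), (0.0, TABLE_H), (TABLE_W, TABLE_H)]


def arrival_times(x, y, t0=0.0):
    """Times at which a sound emitted at (x, y) at time t0 reaches each microphone."""
    return [t0 + math.dist((x, y), m) / SPEED_OF_SOUND for m in MICS]


def locate(times, step=0.01):
    """Grid-search the table for the point whose predicted time differences
    best match the measured ones. Differences are taken relative to
    microphone 0, so the unknown emission time t0 cancels out."""
    measured = [t - times[0] for t in times]
    best, best_err = None, float("inf")
    for i in range(round(TABLE_W / step) + 1):
        for j in range(round(TABLE_H / step) + 1):
            x, y = i * step, j * step
            pred = arrival_times(x, y)
            err = sum((p - pred[0] - m) ** 2 for p, m in zip(pred, measured))
            if err < best_err:
                best, best_err = (x, y), err
    return best
```

A real system would also have to detect the onset of the impact in each microphone signal and cope with noise; the grid search simply trades speed for clarity.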

Organic User Interfaces: Designing Computers in Any Way, Shape or Form

Some key developments in computing are now changing this equation. First, advances in flexible input technologies, like Jun Rekimoto’s capacitive SmartSkin, now allow any surface to sense two-handed, multi-finger touch. Jeff Han’s computer-vision multi-touch displays changed the way designers think about the connection between input and output. Tovi Grossman took input into a different dimension altogether: his use of ShapeTape, a strip of optical fibres that senses bending, eased three-dimensional drawing through direct representation of shape. Input itself is changing, too: Nike markets shoes with accelerometers that sense the wearer’s pace and relay it to an iPod. In Italy, Danilo De Rossi is sewing piezoelectric sensors into clothes that monitor vital functions to help keep their wearers healthy. And in 2007, Lite-On designers won the red dot award for their concept of jelly-like input blobjects that can be moulded to fit the hand (Figure 2b).

The second development is that of so-called electrophoretic ink (e-ink for short), developed at MIT. E-ink, now a Massachusetts-based company, designs displays that behave much like printed paper. E-ink displays reflect ambient light rather than emitting their own, and as such are much more energy efficient than LCDs. E-ink allowed companies such as Philips to start pondering the design of flexible polymer display substrates. The Philips spin-off Polymer Vision has demonstrated Readius: the first smartphone with a foldable electrophoretic display. Much like a paper scroll, its display can be rolled up into the body of the phone. Last February, Sony unveiled its first full-color flexible Organic LED display, the size of a wristwatch. At CES 2007, Philips Research introduced Lumalive, a display made of tiny LEDs that are woven into the fabric of clothing.

The most remarkable development, however, is that of shape-changing materials inspired by organic compounds found in plant and animal life. Materials such as shape memory alloys, which mimic the behavior of muscles, are used by artists like Meixuan Chen to create interactive knitwear that responds to environmental stimuli. Smaller actuators mean that computing devices can now be built to adjust their shape according to some computational outcome, or depending on interactions with users. This will eventually result in displays that are not just volumetric, but that flexibly alter their 3D shape.

Together, these three developments allow for a new category of computers that feature displays of almost any form: curved, spherical, flexible, actuated or arbitrary. E-ink displays will first find widespread use in e-books, mobile appliances and advertising. As costs come down, a logical next step would be curved or flexible displays on products like bottles, cereal boxes, furniture, sportswear, and toys. How will users interact with such oddly shaped displays? What will their user interfaces look like? One thing is clear: they will look very different from the ones we use today. Rather than relying on planar GUIs, they will feature more organic user interfaces.

Defining Organic User Interfaces

“An Organic User Interface is a computer interface that uses a non-planar display as a primary means of output, as well as input. When flexible, OUIs have the ability to become the data on display through deformation, either via manipulation or actuation. Their fluid physics-based graphics are shaped through multitouch and bimanual gestures.”

OUIs aim to support a number of design goals that transcend traditional usability. Their learnability, for example, is governed by the clarity of their affordances, and by their ability to adapt these to new contexts of use. With their emphasis on flexibility and user satisfaction, OUIs inspire users to be creative rather than merely productive. OUIs also promote wellbeing through diversity of posture and ergonomic fit. Finally, although OUIs should be designed for sustainable use (see sidebar), they need not be made out of organic materials for their interface to be considered organic. In 1989, British architect David Pearson formulated the organic design philosophy as follows [4]:

“Let the design:

be inspired by nature and be sustainable, healthy, conserving, and diverse;

unfold, like an organism, from the seed within;

exist in the ‘continuous present’ and ‘begin again and again’;

follow the flows and be flexible and adaptable;

satisfy social, physical, and spiritual needs;

‘grow out of the site’ and be unique;

celebrate the spirit of youth, play and surprise;

express the rhythm of music and the power of dance.”

These words inspired the following three principles for OUI design:

1. Input Equals Output

In a GUI, input devices are distinct and separable from output devices: a mouse is not a display. This is typically not true for interactions with physical objects, however. Paper documents may be stacked, thrown about and folded with a kind of physical immersion that can only be dreamed of in GUI windows. This immersion can only be achieved through a complete synergy between multi-finger, two-handed manipulations on the one hand, and corresponding visual, haptic and auditory representations on the other. OUI displays sense their own shape as input, as well as all other forces acting upon them, and such input is not distinguishable from their graphic output: users literally touch and deform the graphics on display. As such, an OUI display behaves like a real-world object that can be folded into whatever shape best supports interpretation of the data. Bits and pieces of such behavior are already found in Apple’s still-rigid iPhone display: its multitouch screen, e.g., senses the motion of a flicking finger, which provides an impulse to a physics engine that scrolls the menu as if it had been physically flicked.
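The flick-to-scroll behavior can be approximated by a very small physics simulation. In this sketch, which is our assumption rather than Apple’s implementation, the finger’s velocity at lift-off becomes the list’s initial velocity, and a constant per-frame friction factor slows it down until it drops below a stopping threshold. All parameter values are illustrative.

```python
def flick_scroll(release_velocity, friction=0.95, dt=1 / 60, min_speed=1.0):
    """Simulate inertial scrolling after a flick.

    release_velocity: finger speed at lift-off in pixels per second (sign = direction).
    friction: fraction of velocity kept per frame (assumed value).
    dt: frame duration in seconds (assumed 60 frames per second).
    Returns the scroll offset after each frame, until the motion dies out.
    """
    offset, velocity = 0.0, release_velocity
    offsets = []
    while abs(velocity) >= min_speed:
        offset += velocity * dt  # integrate position over one frame
        velocity *= friction     # geometric decay stands in for friction
        offsets.append(offset)
    return offsets
```

Because the velocity decays geometrically, the total scroll distance stays below `release_velocity * dt / (1 - friction)`, and a faster flick travels further.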

Figure 3. Exploring molecular strain in Senspectra.

2. Function Equals Form

According to Wright’s mentor, Louis Sullivan, the shape of a building or object should be predicated on its intended function, not its precedent: “form follows function”. The Bauhaus popularized his credo by abolishing embellishments in everyday design. Wright thought this was a mistake: according to him, form should not follow function; “they should be one, joined in a spiritual union”. This notion resonates with Gibson’s ecological approach to visual perception, embodied in the concept of affordance: the qualities of an object that allow a person to perceive what action to take. That is, the form of an object determines what we can do with it. This describes well what Organic User Interfaces excel at: the physical representation of activities. Picking up a display activates it for input. Rotating it changes the view from landscape to portrait. Likewise, bending the top right corner of the display inwards may invoke a page-down action, while bending it outwards pages up. Bending both sides inwards causes content to zoom in, while bending them outwards zooms out. An example of such behaviors is found in Parkes’ Senspectra, a molecular modeling toolkit (see Figure 3). When a user bends the molecular model, LEDs embedded in the nodes glow according to the amount of strain exerted upon the optical fibres that connect them.
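The bend gestures described above amount to a small mapping from sensed deformations to commands. The sketch below assumes a hypothetical sensor layer that reports a signed, normalized bend value for the top right corner and for each side of the display (positive for inwards, negative for outwards); the threshold that filters out incidental flexing is likewise an assumption.

```python
from typing import Optional

BEND_THRESHOLD = 0.2  # hypothetical normalized bend needed to count as a gesture


def classify_bend(top_right: float = 0.0, left: float = 0.0, right: float = 0.0) -> Optional[str]:
    """Map sensed bends to commands.

    Inputs are hypothetical signed bend amounts in [-1, 1]:
    positive means bent inwards, negative means bent outwards.
    """
    # Both sides bent the same way: zoom.
    if left > BEND_THRESHOLD and right > BEND_THRESHOLD:
        return "zoom_in"
    if left < -BEND_THRESHOLD and right < -BEND_THRESHOLD:
        return "zoom_out"
    # Top right corner bent: page navigation.
    if top_right > BEND_THRESHOLD:
        return "page_down"
    if top_right < -BEND_THRESHOLD:
        return "page_up"
    return None  # no deliberate gesture detected
```

Zoom gestures are checked first so that bending both sides, which may also flex the top right corner, is not misread as a page turn.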

3. Form Follows Flow

Today, more than ever, meaning is shaped by context. Similarly, OUIs adapt their form to better suit different contexts of use. Their shape fluidly follows the flow of user activities across a manifold of physical and social contexts of use and reuse. A simple example is found in the folding of clamshell cellphones. Opening the clamshell activates the phone, a very strong affordance indeed. Closing it ends a call, deactivates the keys, protects the display and reduces the phone’s footprint, all in one go. A more profound example is found in the Readius: its display folds out when needed, thus doubling the available screen real estate when the activity requires it. Like clothing, forms should always suit the activity. Clothing fits the body while closely following its movements, and can even be deformed to serve other functions, like holding objects, if necessary. Thus, if the activity changes, so should the form. This kind of adaptation is best exemplified by actuated OUIs like Lumen (2007) [6], a display that changes shape in 3D, or by SensOrg (1996), an electronic musical instrument with a flexible arrangement of inputs that can be moulded to varying physical or creative demands [10]. The Moldable Mouse in Figure 2b shows how malleability may also reduce the risk of painful repetitive strain injuries. Its body, made of non-toxic polyurethane-coated modeling clay with stick-on buttons, allows input to literally follow the shape of the hand.

Organic User Interfaces: Some Early Examples

One of the first systems to exhibit OUI properties was the Illuminating Clay project (2002) [5]. Bridging the gap between Tangible User Interfaces (TUIs) and OUIs, it was the first interactive display made entirely out of clay. Users could deform the clay model with their hands, and its topography was tracked by an overhead laser scanner. The scan served as input to a 3D landscape visualization, which was then projected back onto the clay surface. Illuminating Clay illustrates the blurring of input and output devices: users could, e.g., alter the flow of a river by moulding valleys into the clay. Function is also triggered by form, which literally follows the flow of interactions.
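Illuminating Clay’s loop (scan the surface, compute on the resulting height map, project the result back) can be illustrated with a simple flow analysis. This is an assumption on our part, not the project’s published algorithm: the sketch uses the common D8 rule, in which water in each cell of a height map flows to its steepest-descending neighbour, so lowering the clay somewhere changes where the water goes.

```python
import math

# Offsets of the eight neighbours of a grid cell.
NEIGHBOURS = [(-1, -1), (-1, 0), (-1, 1), (0, -1), (0, 1), (1, -1), (1, 0), (1, 1)]


def flow_direction(heights, r, c):
    """Return the (dr, dc) step water takes from cell (r, c), or None for a pit.

    heights is a list of rows of surface heights, e.g. from a laser scan.
    Diagonal drops are divided by sqrt(2) to account for the longer distance.
    """
    best, best_slope = None, 0.0
    for dr, dc in NEIGHBOURS:
        rr, cc = r + dr, c + dc
        if 0 <= rr < len(heights) and 0 <= cc < len(heights[0]):
            slope = (heights[r][c] - heights[rr][cc]) / math.hypot(dr, dc)
            if slope > best_slope:
                best, best_slope = (dr, dc), slope
    return best


def trace_river(heights, start):
    """Follow steepest descent from `start` until the water pools in a pit.

    Every step goes strictly downhill, so the loop always terminates.
    """
    path = [start]
    while (step := flow_direction(heights, *path[-1])) is not None:
        path.append((path[-1][0] + step[0], path[-1][1] + step[1]))
    return path
```

In an Illuminating Clay-style setup, such a computation would rerun on every new scan, so the projected river would follow the clay as it is reshaped.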

Gummi: Flexing Plastic Computers

Another early envisionment of an OUI was Gummi (2004) [8]. Gummi was a pressure-sensitive PDA that simulated a flexible credit card displaying an interactive subway map. Bending the display would cause the subway map to zoom in or out, while a touch panel on the back allowed users to scroll. Again, function equals form: the shape of the display affords zooming, and the interplay between haptics and visuals reinforces this functionality.

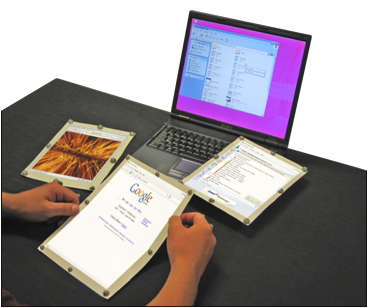

Figure 4. Some possible interactive shapes for paper computers. a) Organizing window sheets in a stack. b) Leafing through window sheets. c) Folding window sheets into three-dimensional content.

Paper Computers

Books and paper also form powerful sources of inspiration for flexible computer design. Paper is a particularly versatile medium of information. According to Sellen and Harper [9], users still prefer paper over current computer displays because it makes navigation more flexible. Paper input is direct and two-handed, and provides a rich, synergetic set of haptic and visual cues. Paper supports easier transitions between activities by allowing users to pick up and organize multiple documents with both hands. Paper is also extremely malleable: it can be folded (a primary source of input when constructing models) or bent (most often applied in navigation). Paper can be arranged randomly or in stacks, and can even contain other objects. With the development of flexible e-ink displays, we can imagine that some day our computers will be indistinguishable from a sheet of paper. One of the questions we have been trying to answer is: how will we interact with such flexible computers?

We experimented with the use of Foldable Input Devices (FIDs) for GUI manipulations by tracking the shape of several cardboard sheets that featured retroreflective markers (see Figure 4). Behaving like real paper documents, 3D graphics windows follow the shape of their associated FIDs. When FIDs are stacked, so are the window sheets in the GUI (Fig. 4a). Stacks of window sheets are sorted with a shake and browsed by leafing through them (Fig. 4b). Using a special FID, window sheets can even be folded into 3D models, further blurring the distinction between a window sheet and its content (Fig. 4c).
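One way to keep the on-screen stack in step with the physical stack of FIDs is to group sheets that lie on top of each other and order each group by tracked height. This is a sketch under assumptions, not our tracking code: the tracker is assumed to report a centre position and height per sheet, and sheets whose centres lie within a hypothetical radius of each other are treated as one stack.

```python
from dataclasses import dataclass


@dataclass
class TrackedSheet:
    window_id: str  # GUI window associated with this FID
    x: float        # tracked centre of the sheet on the desk, in metres (assumed)
    y: float
    z: float        # tracked height above the desk, in metres (assumed)


def group_into_stacks(sheets, radius=0.05):
    """Group sheets whose centres lie within `radius` of a stack's first sheet,
    then list each stack's windows from top to bottom by height."""
    groups = []
    for sheet in sheets:
        for group in groups:
            first = group[0]
            if (sheet.x - first.x) ** 2 + (sheet.y - first.y) ** 2 <= radius ** 2:
                group.append(sheet)
                break
        else:  # no nearby stack found: this sheet starts a new one
            groups.append([sheet])
    return [
        [s.window_id for s in sorted(group, key=lambda s: s.z, reverse=True)]
        for group in groups
    ]
```

The GUI would then draw each stack’s windows in the returned order, topmost first.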

Figure 5. PaperWindows: A prototype paper computer.

Inspired by Wellner’s DigitalDesk (1991) [11], PaperWindows (2004, Figure 5) [2] was the first computer made entirely out of three-dimensional sheets of paper. It simulated the flexible, high-resolution, full-color and wireless e-ink display of the future.

PaperWindows documents are regular sheets of paper, augmented with 8 retroreflective markers. These markers allow a Vicon motion-capture system to track the motion and shape of the paper, which is modeled as a NURBS surface textured with the real-time content of an application window. When the textured model is projected back onto the paper, it corrects any projection skew caused by paper folds, giving the illusion that the paper is, in fact, an interactive print. We experimented with a web browser whose functions were mostly accessible by changing the shape of the paper, the primary display of the computer. Bending the sheet around its horizontal axis would cause the web browser to page down or up. Bending the document back around its vertical axis would cause the browser to go back or forward in its browsing history. Fingers were also tracked: a link was clicked by touching it. A paper window was activated by picking it up. Information could be copied from one document to another by rubbing two windows against each other. Documents could be enlarged through collation, and sorted by stacking. Such physical interaction techniques remove any distinction between input and output: in PaperWindows, the shape, location, and orientation of the display are the primary forms of input.
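The bend recognizer itself is not described above, so the following is only a hedged sketch. It assumes, hypothetically, that the 8 markers sit at the four corners and the four edge midpoints, and that their heights are already expressed relative to the sheet’s flat rest plane. Bending around the horizontal axis lifts the corners and the top and bottom midpoints while the left and right midpoints stay on the fold line; bending around the vertical axis does the reverse. Which bend direction maps to page down, or to back, is also our assumption.

```python
from typing import Dict, Optional, Tuple


def bend_amounts(z: Dict[str, float]) -> Tuple[float, float]:
    """Estimate bends from marker heights in the sheet's own rest frame.

    z maps hypothetical marker names to heights: corners 'tl', 'tr', 'bl', 'br'
    and edge midpoints 'mt', 'mb', 'ml', 'mr' (top, bottom, left, right).
    """
    corners = (z["tl"] + z["tr"] + z["bl"] + z["br"]) / 4
    # Horizontal-axis bend: left/right midpoints stay on the fold line.
    horizontal = corners - (z["ml"] + z["mr"]) / 2
    # Vertical-axis bend: top/bottom midpoints stay on the fold line.
    vertical = corners - (z["mt"] + z["mb"]) / 2
    return horizontal, vertical


def browser_command(z: Dict[str, float], threshold: float = 0.01) -> Optional[str]:
    """Map the dominant bend to a browser action (assumed mapping)."""
    horizontal, vertical = bend_amounts(z)
    if max(abs(horizontal), abs(vertical)) < threshold:
        return None  # sheet is roughly flat
    if abs(horizontal) >= abs(vertical):
        return "page_down" if horizontal > 0 else "page_up"
    return "back" if vertical > 0 else "forward"
```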

Figure 6. Interactive Blobjects. a) Dynacan computer with Flash animation. b) iPod form factor on an interactive cardboard design bench. c) Zoom gesture on spherical screen with Google Earth.

From Interactive Blobjects to Curved Computer Interactions

In the Interactive Blobjects project, we are exploring opportunities afforded by marrying everyday objects with oddly shaped displays, through tracking and projection. For example, Dynacan, the dynamic pop can featured in Fig. 6a, is an early prototype of a fully recyclable curved computer. Its display features Flash animations, videos and RSS feeds. Future versions will be made of flexible full-color e-ink, powered by a processor and battery pack inside the can. Users can scroll by rotating the can, a motion sensed by a set of accelerometers. Electronic components can be made detachable prior to recycling of the can. Dynacan is part of a larger workbench investigating OUI design. Figure 6b shows how any piece of cardboard, whether curved or cube-shaped, can simulate a computer interface. By selecting dials, menus and interactive skins from a palette of interaction styles, shown in the background, a simple cardboard box is turned into a fully functional iPod. Press a finger on the palette, and the iPod becomes a fully functional iPhone instead. More complex blobjects are also possible, like architectural cardboard models with live animated textures, or interactive spherical displays, like the one depicted in Figure 6c.
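Turning a rotation of the can into scrolling might look like this. It is a minimal sketch that assumes, hypothetically, a two-axis accelerometer mounted in the can’s cross-section and a can held roughly horizontally, so that gravity’s projection onto that plane turns as the user rotates the can. The change in the angle atan2(a_y, a_x) is unwrapped across the ±π boundary and scaled by an assumed gain; the sign convention depends on how the sensor is mounted.

```python
import math

PIXELS_PER_RADIAN = 200.0  # hypothetical scroll gain


class RotationScroller:
    """Turns successive accelerometer readings into a scroll offset."""

    def __init__(self, gain=PIXELS_PER_RADIAN):
        self.gain = gain
        self.last_angle = None
        self.offset = 0.0

    def update(self, ax, ay):
        """Feed one reading of gravity's components in the can's cross-section."""
        angle = math.atan2(ay, ax)
        if self.last_angle is not None:
            delta = angle - self.last_angle
            # Unwrap so that crossing +/-pi counts as a small turn, not a full one.
            delta = (delta + math.pi) % (2 * math.pi) - math.pi
            self.offset += self.gain * delta
        self.last_angle = angle
        return self.offset
```

A real device would also low-pass filter the readings, since shaking the can adds acceleration on top of gravity.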

Conclusions

The above examples only scratch the surface of the ways in which we might interact with future computers of any shape or form: computers with displays that are curved, flexible, and that may even change their own shape to better fit the data, or the user for that matter. In Organic User Interface design, these computers will no longer be conceived of as distinguishable from the world in which they live. All physical aspects of a display, from its shape to the forces acting upon it, will be used to manipulate information. Functions will be triggered through form changes that follow the flow of the ever-changing world of the user. In a world where the solitary task is an increasingly endangered species, the chief purpose of an OUI is to interweave a plurality of highly contextualized, interspersed activities across a variety of disconnected contexts. One challenge will be to do so in a manner that maintains consistency across activities and contexts. Rather than relying on a single OUI acting as an advanced Swiss Army knife, users should be able to use the OUI whose form is most appropriate for a particular activity. Flexibility should not be misinterpreted as “one OUI fits all”: it is exactly in celebrating the diversity of display shapes that a wealth of OUI designs will find their purpose.

In the not-so-distant future, curved, full-color, flexible OLED or e-ink displays will appear in our homes, furniture, e-books, jewellery and clothing. When tired of the color of your suit, the pattern of your wallpaper, or the interface on your cellphone, you will simply download a new one from an online store. Some hardware interfaces may one day be monetized entirely like software, replacing the wasteful trend of buying new atoms with that of buying more eco-conscious bits. That would be a final frontier in the design of computer interfaces: one that turns the natural world into software, and software into the natural world.

References

1. Haeckel, E. Art Forms in Nature. Munich & New York: Prestel Verlag, 1998.

2. Holman, D., Vertegaal, R. and Troje, N. PaperWindows: Interaction Techniques for Digital Paper. In Proceedings of ACM CHI 2005, Portland, OR, 2005, pp. 591-599.

3. Wright, F. L. An Organic Architecture: The Architecture of Democracy. London: Lund Humphries, 1939.

4. Pearson, D. The Natural House Book. London: Fireside, 1989.

5. Piper, B., Ratti, C. and Ishii, H. Illuminating Clay: A 3-D Tangible Interface for Landscape Analysis. In Proceedings of ACM CHI 2002, Minneapolis, MN, 2002, pp. 355-362.

6. Poupyrev, I., Nashida, T. and Okabe, M. Actuation and Tangible User Interfaces: the Vaucanson Duck, Robots, and Shape Displays. In Proceedings of TEI’07, Baton Rouge, LA, 2007, pp. 205-212.

7. Rashid, K. Karim Rashid: I Want to Change the World. Universe Publishing, 2001.

8. Schwesig, C., Poupyrev, I. and Mori, E. Gummi: A Bendable Computer. In Proceedings of ACM CHI 2004, Vienna, Austria, 2004, pp. 263-270.

9. Sellen, A. and Harper, R. The Myth of the Paperless Office. Cambridge, MA: MIT Press, 2003.

10. Vertegaal, R. and Ungvary, T. Tangible Bits and Malleable Atoms in the Design of a Computer Music Instrument. In Summary of ACM CHI 2001, Seattle, WA, 2001, pp. 311-312.

11. Wellner, P. Interacting with Paper on the DigitalDesk. Communications of the ACM 36(7), 1993.

Bios

David Holman is a PhD student at the Human Media Laboratory at Queen’s University in Canada.

Roel Vertegaal is an interaction designer and scientist. He is Associate Professor of Human-Computer Interaction at Queen’s University in Canada, where he directs the Human Media Lab. He is also President and CEO of Xuuk, Inc., a startup that develops and markets attention sensors, like eyebox2, for advertising metrics. Roel holds degrees in Music from Utrecht Conservatory and in Computer Science, as well as a PhD in Human Factors. Amongst other things, Roel co-founded the alt.chi sessions at the annual ACM CHI conference.
