
Designing Kinetic Interactions for Organic User Interfaces

by Amanda Parkes, Ivan Poupyrev and Hiroshi Ishii

We are surrounded by a sea of motion: everything around us is in a state of continuous movement. We experience numerous and varied kinds of motion: voluntary motions of our own body as we walk; passive motion induced by natural forces, such as the rotation of windmill blades in the wind or the fall of a leaf from a tree due to the force of gravity; physical transformations, such as the growth of a flower or the inflation of a balloon; and the mechanical motion of the machines and mechanisms that populate our living spaces.

It is hardly surprising, then, that humans have always been perplexed and fascinated by the nature of motion. In 500 BC, the Greek philosopher Parmenides declared that all motion is an illusion. Experimental and theoretical studies of motion by Galileo and Newton laid the foundation of modern physics and modern science, and Einstein’s general theory of relativity explained movement on a cosmic scale.

Perhaps more than trying to understand motion, however, humans have always been fascinated with producing artificial motion. While the development of machines that transform energy into mechanical motion, in particular steam engines, underpinned the industrial revolution of the 18th and 19th centuries, it was the development of moving pictures that most convincingly demonstrated the power of motion as a communication medium. Since then, however, the use of motion as a communication medium has been mostly limited to the rectangular screens of movie theaters, TVs or computer displays. Several research directions, such as Augmented Reality (AR) and ubiquitous computing, have attempted to take moving images from the screen into the real world. However, the underlying paradigm has hardly changed: the screen may change location, e.g., moving images can be projected on a table, but the objects displayed to the user and their motion remain essentially virtual.

Recently, however, there has been a rapid growth of interest in using real kinetic motion of physical objects as a communication medium. Although the roots of this interest stretch back as far as the 18th century to early work on automata, it was the recent emergence of new “smart” materials, tiny motors and nanomanipulators, organic actuators and fast networked embedded microprocessors that created new and exciting opportunities for taking motion out of the screen and into the real world. Instead of simulating objects and their motion on screen, we attempt to dynamically re-shape and re-configure real physical objects, and perhaps entire environments, to communicate with the user.

Kinetic interaction design forms part of the larger framework of Organic User Interfaces (OUI) that is discussed in this special issue: interfaces that can have any shape or form [19]. We define Kinetic Organic Interfaces (KOIs) as organic user interfaces that employ physical kinetic motion to embody and communicate information to people. Shape-changing inherently involves some form of motion, since any transformation of a body can be represented as motion of its parts. Thus kinetic interaction and kinetic design are key components of the OUI concept. With KOIs, the entire real world, rather than a small computer screen, becomes the design environment for future interaction designers.

There are several reasons why KOIs are exciting and different from previous interaction paradigms. Firstly, KOIs exist in the real world that surrounds us. Creating environments that can seamlessly mix computer-generated entities with the real world has been one of the most important research directions in recent history. For example, AR systems dynamically overlay real-time 3D computer graphics imagery on the real-world environment, allowing users to see and interact with both physical and virtual objects in the same space [15]. Unlike in AR interfaces, however, with KOIs all objects are real and therefore perfectly mixed with both living organisms and inanimate objects. Merging the computer interface with the real world therefore means that the interface can be significantly more intimate, becoming an organic part of our environment. Secondly, unlike virtual images, real physical motion can communicate information on several perceptual levels: it can stimulate not only visual, but also aural, tactile and kinesthetic sensations in humans. This allows for much richer and more effective interactions than have been possible before. Finally, human beings possess a deeply rooted response to motion, innately recognizing in it a quality of ‘being alive’, which provokes a significantly deeper and more emotional response from users.

This paper presents a framework for this emerging field of kinetic interaction design. We discuss previous work that provides the foundation of motion design in interaction, and analyze what can be learned and applied from relevant theories and examples in robotics, kinetic art and architectural systems. We also discuss some of the current directions in kinetic interface design and conclude by proposing principles that can be applied in the future design of such interfaces and forms. Described as “one of 20th-century art’s great unknowns” [2], the language of movement has been an underutilized and little examined means of communication, and we believe this paper will show that the use of motion in human-computer interfaces is still in its infancy. This paper aims to provide a broader perspective on the possibilities of kinetic interaction design, taking advantage of motion as a medium for creating user interactions befitting the 21st century.

Kinetic Precedents: Learning from Automata, Kinetic Art and Robots

Human beings have a rich history of designing and utilizing kinetic forms in art, automata and robotics, from which we can draw inspiration for, and analysis of, the possibilities of kinetic interaction design. In particular, the eighteenth century marked a significant increase in the phenomenon of human or animal automata, i.e. self-moving machines. One of the most famous of these was a mechanical duck by the Frenchman Jacques de Vaucanson. The duck was described as a marvel which ‘drinks, eats, quacks, splashes about on the water, and digests his food like a living duck.’ Another similarly spectacular automaton from this period was The Writer by the Swiss Pierre Jaquet-Droz: with internal clockwork mechanics, this life-size figure of a boy could write any message up to 40 letters long. The interesting characteristic of these early automata was that they were not utilitarian in nature, but were constructed as highly technological decorations to be observed and enjoyed [17]. They reflected the early human fascination with simulating human characteristics in machines, and in particular our ability for self-initiated motion.

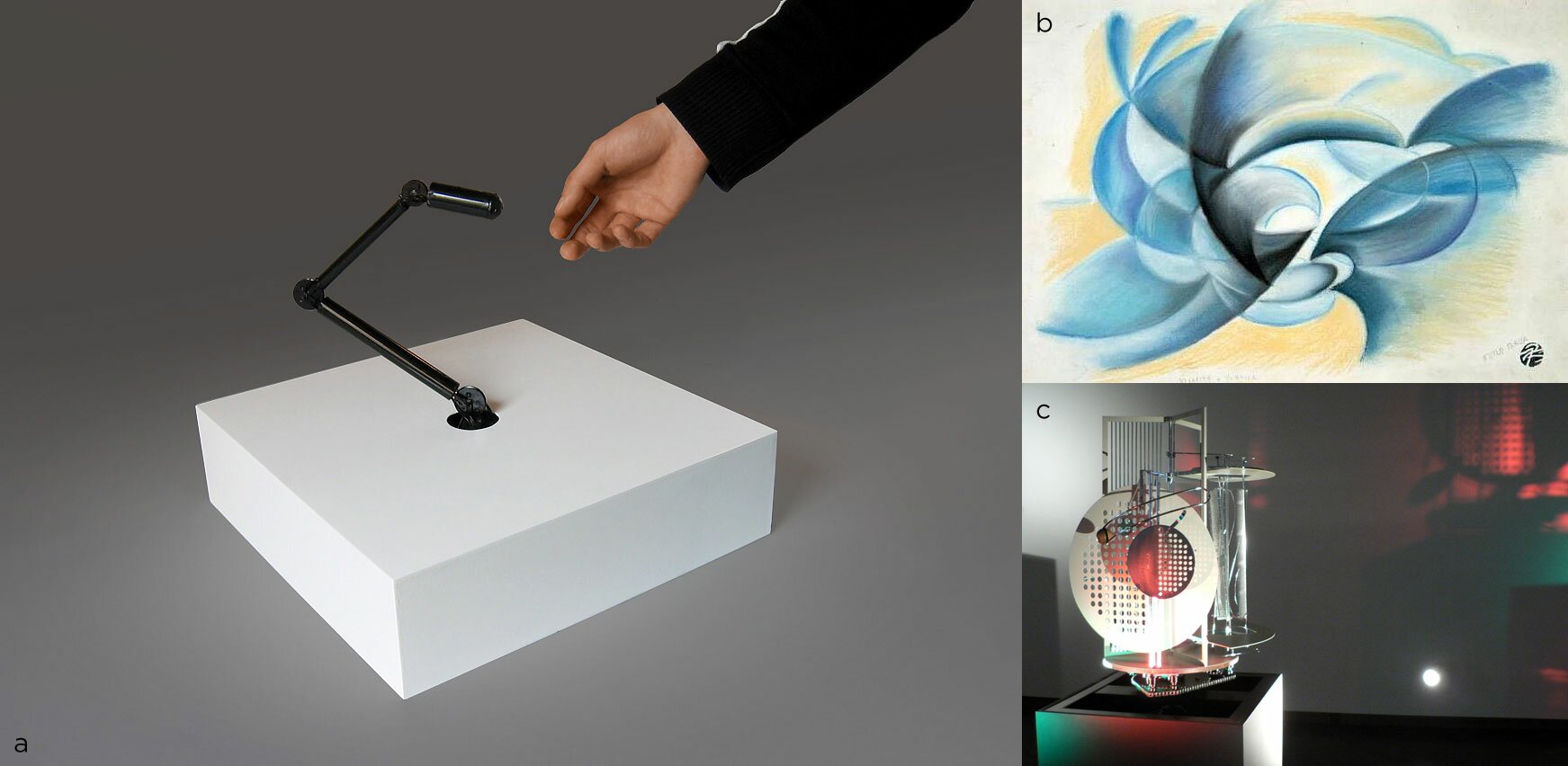

In the early 20th century, Italian Futurists explored motion as a primary means of artistic expression, looking at motion as a ‘concept’. While the Futurists did not create mechanical kinetic devices, they were the first to investigate the concept of motion and speed as a plastic expressive value and the first to create an artistic vocabulary based on motion. Paintings such as Giacomo Balla’s “Speed and rotation”, a Futurist work from 1913 (Figure 1b), represent “an expression of time and space through the abstract presentation of movement” [12].

The 1920s saw the launch of what is considered ‘kinetic art’: works which featured real physical movement in three-dimensional space. Artists such as Laszlo Moholy-Nagy, Alexander Calder, and Nicholas Takis experimented with creating sculptures whose parts were moved by air currents, magnetism, electromechanical actuators or the spectators themselves. The aim of these kinetic artists was to make movement a central part of the art piece, where motion itself presented artistic and aesthetic value to the viewer (Figure 1c). These early kinetic works show the strong aesthetic value of physical motion. These aesthetics are being explored today by artists such as Sachiko Kodama, who creates organic kinetic sculptures based not on physical objects but on magnetically actuated fluids; her work is introduced later in this issue.

Moving into contemporary times, the field of social robotics and robotic art offers a rich motion vocabulary in both the functional and perceptual areas. While some projects, e.g. the robotic dog Aibo, have attempted to simulate animal or human forms and movements, others have attempted to design an independent and unique motion vocabulary to communicate with the user. For example, Outerspace [9] presents a reactive robotic creature resembling an insect antenna, flexible enough to explore the environment (Figure 1a). Outerspace appears as a playful, curious creature exploring the surrounding space, looking for light, motion, and contact. As Outerspace engages with an observer, its motion patterns, based on body language and human gesture, change in response to stimulus and contact, engaging the observer in a social interaction. Although abstracted, Outerspace’s organic motion repertoire allows the user to perceive a sense of intelligence in the creature, changing the nature of the interaction.

These examples, from early automata to kinetic art to social robotic creatures, demonstrate how reactive kinetic motion designed to be mimetic of a living organism has the power to engage us, fascinate us and create an interactive conversation with an otherwise disembodied object. It is our innate ability as human beings to be engaged by the lifelike qualities of motion that allows us to employ the movement of objects as a tool for communication and engagement, and allows inanimate objects to become partners in our interactions.

Figure 1. (a) Outerspace (b) Giacomo Balla’s “Velocità e Vortice” (c) Laszlo Moholy-Nagy’s “Light-space Modulator” (replica at the Van Abbe Museum, Eindhoven, image courtesy HC Gilje)

Kinetic Design for Human-Computer Interaction

The examples in robotics and kinetic art have demonstrated how motion in a self-actuated entity can be used to engage and communicate. Here, we continue to explore how such motion constructs can be applied to designing user interfaces. In other words, if we imagine that the entire world around us can deform itself in response to our actions, what kind of user interface experiences and new productivity tools could become possible?

A growing number of projects in interface design have laid the groundwork for a discussion of kinetic design. Some of the important early exploration has been conducted in the field of Tangible User Interfaces (TUI) [7] and in ambient user interface projects such as Pinwheels and Ambient Fixtures [4]. Within tangible interfaces, however, the coupling between the physical and the digital has usually been in one direction only: we can change digital information through physical handles, but the digital world has no effect on the physical elements of an interface. Adding elements of kinetic design establishes bi-directional relationships in TUIs, significantly expanding their design and interaction vocabulary.

The kinetic interfaces concept, however, is broader than the TUI paradigm: our inspiration partially comes from one of the earliest visions of computer-controlled kinetic environments, suggested by Dr. Ivan Sutherland, a pioneer of interactive 3D computer graphics and virtual reality. In 1965 he speculated that the ideal, Ultimate Display would be “… a room within which the computer can control the existence of matter. A chair displayed in such a room would be good enough to sit in. Handcuffs displayed in such a room would be confining, and a bullet … would be fatal” [18]. Although manipulating matter on the molecular level, which would be required for such an Ultimate Display, is currently impossible, the Ultimate Display proposes a way of thinking about KOIs as a new category of display devices that communicate information through physical shape and motion. In a sense, every instance of kinetic design that we discuss below can be considered an early and crude approximation of the Ultimate Display applied to a specific application.

Basic phrases of motion

In KOIs, motion is delineated by physical components that are actuated in a way that the user can detect and respond to. There are countless kinds of motion; however, most of the motions in KOIs can be represented by describing the spatial motion of individual elements of the kinetic interface. These motions can be perceived not only visually, but also haptically, i.e. through physical contact, or aurally, since moving objects may produce sound. Therefore, the basic vocabulary of kinetic interface design includes the speed, direction and range of the motion of interface elements, which can be either rotational or linear positional movement. The forces that moving objects may apply to the user or other objects in the environment are another important design variable. Finally, the physical properties of interface elements, such as surface texture or surface shape, can also be controlled and used for interaction. These define a very elementary vocabulary that interaction designers can use in creating kinetic interaction techniques.

Below, we discuss some of the approaches to designing kinetic interactions and illustrate the discussion with examples of several systems that have been developed. The overview is not meant to be an exhaustive survey of the current state of Kinetic Organic Interfaces, but rather to categorize and indicate some of the directions of future development. As the field matures, new concepts and applications will certainly appear.

Figure 2. (a) PICO (b) Topobo

Actuation in dynamic physical controls

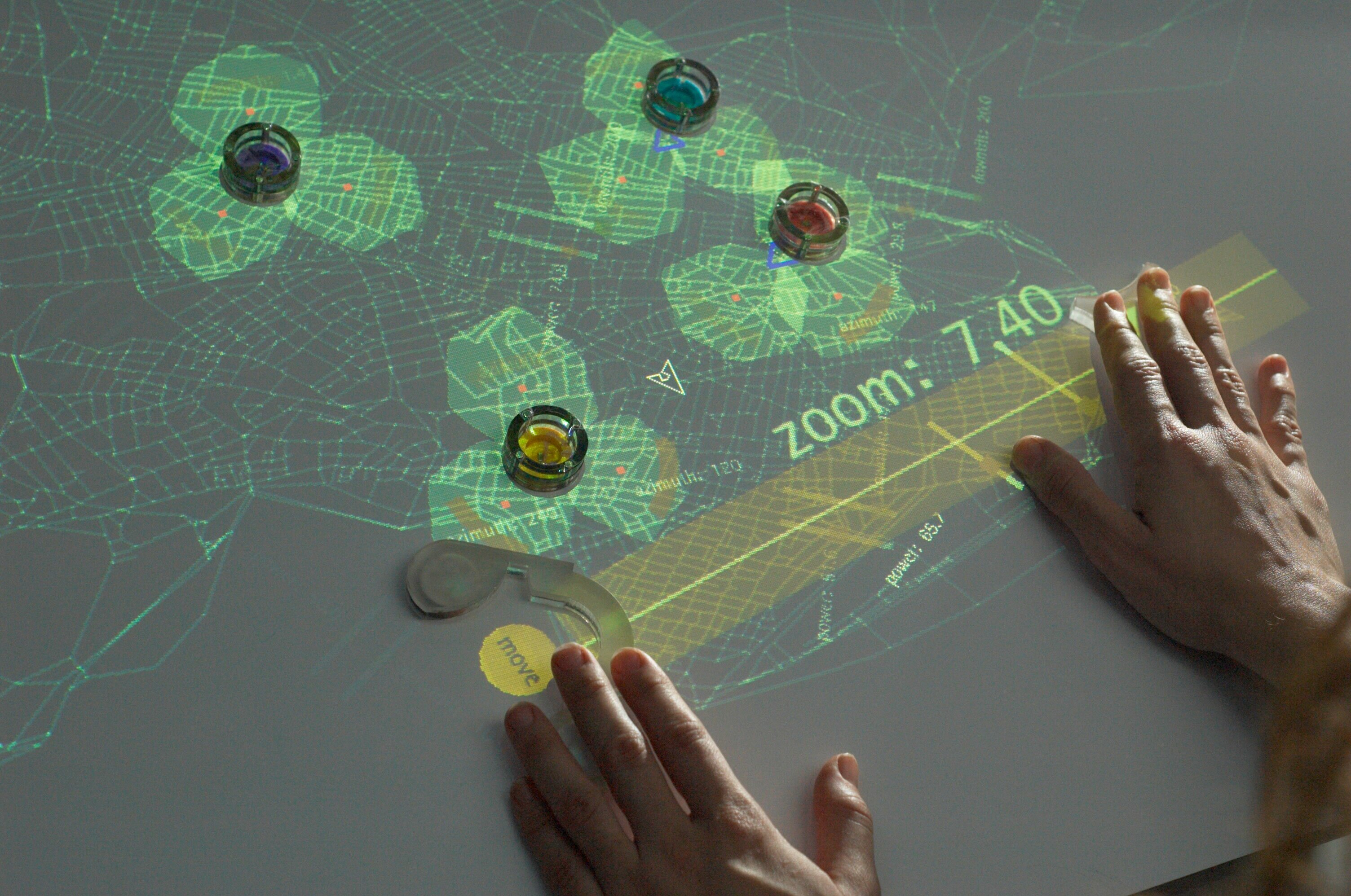

The first category of KOIs is the most straightforward application of actuation in user interfaces: dynamically reconfigurable physical controls. For example, PICO [11] and the Actuated Workbench [10] use an array of electromagnets embedded in a table to physically move the input controls, i.e., pucks on a table top (Figure 2a). The pucks can also be used as input devices. The important property of such interfaces is that they maintain consistency between the state of the underlying digital data and the physical state of the interface controls. In one application the system was used for computing the locations of cell phone towers; every time the layout of towers was recomputed, the corresponding pucks physically moved to reflect the new configuration.
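The consistency property can be illustrated with a minimal sketch. It assumes a hypothetical controller that knows each puck's physical position and nudges it toward the position held in the digital model; the function names, the step size and the dictionary layout are illustrative assumptions, and the electromagnet control used by the actual systems is not modeled.

```python
def step_toward(current, target, max_step):
    """Move a point at most max_step closer to target; return the new (x, y)."""
    dx = target[0] - current[0]
    dy = target[1] - current[1]
    dist = (dx * dx + dy * dy) ** 0.5
    if dist <= max_step:
        return target  # close enough: snap exactly onto the target
    scale = max_step / dist
    return (current[0] + dx * scale, current[1] + dy * scale)


def sync_pucks(physical, digital, max_step=1.0, max_iterations=1000):
    """Nudge every puck until the physical layout matches the digital model.

    physical and digital are dicts mapping a puck name to an (x, y) position.
    Both are hypothetical stand-ins for real sensing and actuation.
    """
    positions = dict(physical)
    for _ in range(max_iterations):
        if all(positions[name] == digital[name] for name in digital):
            break  # physical state now mirrors the digital state
        for name, target in digital.items():
            positions[name] = step_toward(positions[name], target, max_step)
    return positions


# Usage: a recomputed tower layout pulls the pucks to their new locations.
layout = sync_pucks({"tower_a": (0.0, 0.0)}, {"tower_a": (3.0, 4.0)})
```

Moving in bounded steps rather than jumping is a design choice made for the sketch: it mimics a puck that travels across the table instead of teleporting.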

Kinetic physical controls provide one possible solution for an important interface design challenge: how to create interfaces that are simple, yet provide sufficient functionality to control complex problems. In the kinetic approach used in PICO, controls can be provided “on demand”, simply adding them when needed, with the system repositioning elements according to the current state of the system. Another approach is to create physical controllers on the fly by modifying the shape of the control surface: this approach is investigated in shape-shifting kinetic displays that we discuss later.

Actuation as embodiment of information

As a communication medium, the motion of elements in a KOI can be used to embody representations of data or changes in data. In static form, such an interface need not contain information; it is purely its kinetic behavior that communicates with the user. This approach has been investigated in ambient display projects: displays that communicate digital information at the periphery of human perception [7]. A classic example is the Pinwheels [4] project, where a stream of data, such as stock market activity, is mapped to the motion of a set of pinwheels, which speed up clockwise if the markets are rising, for example. The pinwheels exist purely as ordinary non-computational objects; it is only their motion, such as speed and direction, that allows them to become communication devices.
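A mapping of this kind can be sketched in a few lines. The sketch below is an assumption-laden illustration rather than the Pinwheels implementation: the scaling factor, the speed cap and the choice of counterclockwise rotation for falling values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PinwheelCommand:
    direction: str    # "clockwise", "counterclockwise" or "stopped"
    speed_rpm: float  # rotation speed, never negative


def market_to_pinwheel(change_percent, rpm_per_percent=30.0, max_rpm=120.0):
    """Map a percentage change in a data stream to a pinwheel's spin.

    Rising values spin clockwise, as in the example in the text; spinning
    counterclockwise for falling values is an assumption of this sketch.
    """
    if change_percent == 0:
        return PinwheelCommand("stopped", 0.0)
    direction = "clockwise" if change_percent > 0 else "counterclockwise"
    speed = min(abs(change_percent) * rpm_per_percent, max_rpm)
    return PinwheelCommand(direction, speed)


# Usage: a 2% rise yields a moderate clockwise spin.
command = market_to_pinwheel(2.0)
```

Capping the speed keeps an extreme data value from driving the motor beyond a range that remains readable at the periphery of attention.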

Another important use of kinetic motion for information communication can be found in haptic user interfaces: devices that allow users to feel information through tactile or kinesthetic sensations. This can be achieved by applying forces that restrict the movement of the user’s limbs, as in force-feedback interfaces, or by mechanically stimulating the user’s skin, as in tactile user interfaces [3]. Haptic interfaces have been extensively investigated in virtual reality and telepresence applications, where they allow users to feel object properties such as resistance, weight and surface texture. Recently, haptic interfaces have been used in desktop and mobile interfaces, allowing users, for example, to feel information on a touch screen with their fingers [13]. Although haptic user interfaces have a long history, the research has focused mostly on the specifics of producing and understanding haptic sensations. In KOIs we take a much broader approach that explores the use of kinetic motion on multiple perceptual levels, including haptics.

Actuation as embodiment of gesture

An emerging class of KOIs records motion and gestures directly from the human body and replays them, creating the sense of a living organism. For example, Topobo [16] is a 3D constructive assembly with kinetic memory, the ability to record and play back physical motion in 3D space (Figure 2b). By snapping together a combination of static and motorized components, people can quickly assemble dynamic biomorphic forms like animals and skeletons. These constructions can be animated by physically pushing, pulling, and twisting parts of the assembly; Topobo components can record and play back their individual motions, creating complex and elaborate movement in the overall structure of a creation. Importantly, the kinetic recording occurs in the same physical space in which it plays back: the user “teaches” an object how to move by physically manipulating the object itself. This provides an elegant and straightforward method for motion authoring in future kinetic interactions.

Figure 3. (a) Lumen (Photograph by Makoto Fujii, courtesy AXIS magazine) (b) Source

Actuation as form generation

Perhaps one of the most inspiring categories of kinetic interfaces is that of devices and displays that can dynamically change their physical form to display data or in response to user input. Such displays have often been referred to as shape-shifting devices. One approach to designing such self-deformable displays is to create kinetic relief-like structures, either on the scale of a table-top device, as in Feelex [8] and Lumen [14] (Figure 3a), or on the scale of entire buildings, as in Mark Goulthorpe’s Aegis Hyposurface [1]. An alternative approach is illustrated by the Source installation [6], which allows direct creation of low-resolution 3D shapes hanging in space. It consists of 729 balls suspended on metal cables forming a 9×9×9 spatial grid, where each ball is a “pixel” (Figure 3b). By moving on the cables, the balls can form letters and images floating in space.
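To make the idea of a ball as a “pixel” concrete, the following sketch treats the 9×9×9 grid as a simple three-dimensional array and marks the positions that belong to a displayed letter. This is only an illustrative model: how the installation actually drives its balls along the cables is not described here, and the function names and the letter shape are assumptions.

```python
GRID = 9  # positions per axis, giving 9 * 9 * 9 = 729 in total


def empty_grid():
    """Return a GRID x GRID x GRID array with no position marked."""
    return [[[False] * GRID for _ in range(GRID)] for _ in range(GRID)]


def draw_letter_l(grid, depth=4):
    """Mark an 'L' shape in one vertical slice; grid[x][y][z], y is height."""
    for y in range(1, 8):   # vertical stroke
        grid[2][y][depth] = True
    for x in range(2, 7):   # horizontal foot along the bottom of the stroke
        grid[x][1][depth] = True
    return grid


def count_marked(grid):
    """Count the positions that take part in the displayed shape."""
    return sum(cell for plane in grid for row in plane for cell in row)


# Usage: a single letter occupies only a small part of the volume.
letter = draw_letter_l(empty_grid())
marked = count_marked(letter)
```

The low resolution is visible in the numbers: the letter uses 11 of the 729 available positions.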

Shape displays explore how physical transformability can embody the malleability so valued in the digital realm. They communicate information by manipulating 3D physical shapes in real time that can be either seen or felt by hand. Information can be communicated not only by creating a physical shape but also by modifying or rearranging existing shapes, as in the case of claytronics robots, i.e. self-reconfigurable robots, under development at Carnegie Mellon University [5].

Toward a Design Language for Kinetic Organic Interfaces

The above examples of Kinetic Organic Interfaces have demonstrated a variety of methods for incorporating kinetic behavior as a valuable strategy in interface design. However, they have barely scratched the surface of the possibilities we see in this relatively untapped arena. As designers and HCI scientists begin to explore the language of motion more fully, we discuss below some of the salient design parameters and research questions to consider when utilizing kinetic motion in interaction design.

Form and Materiality

For motion to be recognized and comprehended, it must be embodied in a material form. Hence, a crucial and little understood design parameter is how the properties of materials and forms affect motion perception and control. A very significant perceptual shift can occur with a change in material and form: the jerky, disjointed motion of a series of mechanical motors can be embedded in a soft padded exterior, and the perceived quality of motion can be inverted to a smooth oscillation. Understanding material affordances and their interaction with the user, other objects, and environmental light and sound is crucial in designing kinetic interactions.

Kinetic Memory and Temporality

While computational control allows actuated systems to provide real-time physical feedback, it also offers the capability to record, replay and manipulate kinetic data as if it were any other kind of computational data. We refer to such data as kinetic memory, an idea introduced earlier by Topobo [16]. The concept of kinetic memory opens new and unexplored capabilities for KOIs: for example, objects can fast-forward or slow down a motion sequence, or move it backward or forward in time; objects can also “memorize” their shape history and share it with other objects.
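Treating motion as ordinary data can be sketched as follows. The sketch assumes a recording is a list of (time, angle) samples for a single joint; this representation and the function names are illustrative assumptions, not the format used by Topobo.

```python
def record(angles, interval_s):
    """Turn evenly spaced joint angles into a list of (time, angle) samples."""
    return [(i * interval_s, angle) for i, angle in enumerate(angles)]


def time_scale(recording, factor):
    """Fast-forward (factor > 1) or slow down (factor < 1) a recording."""
    return [(t / factor, angle) for t, angle in recording]


def reverse(recording):
    """Play a recording backward: same timestamps, angles in reverse order."""
    times = [t for t, _ in recording]
    angles = [angle for _, angle in recording][::-1]
    return list(zip(times, angles))


# Usage: record a short gesture, then replay it twice as fast and backward.
gesture = record([0, 10, 20], interval_s=0.5)
fast = time_scale(gesture, 2)
backward = reverse(gesture)
```

Because the recording is plain data, the same list could also be copied to another object, which is one way to read the idea of objects sharing their motion history.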

Repeatability and Exactness

We can easily distinguish artificial motion because of its exact repeatability. In designing kinetic interactions, repeatable exactness is the simplest form of control state, and in many behaviors it is easily identifiable. Introducing a level of variation, or perhaps even “noise”, into kinetic interfaces can add a degree of organic, natural feeling that is usually missing from direct digital actuator control.
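One simple way to introduce such variation is to perturb each target position by a small random offset. The sketch below is a minimal illustration under that assumption; the function name, the uniform noise model and the amplitude are hypothetical choices, and other noise models may feel more natural in practice.

```python
import random


def with_variation(trajectory, amplitude, seed=None):
    """Return a copy of the trajectory with bounded random offsets added.

    trajectory is a list of target positions for one actuator. Each value is
    shifted by a random amount in [-amplitude, +amplitude]. Passing a seed
    makes the variation reproducible, which is useful for testing.
    """
    rng = random.Random(seed)
    return [p + rng.uniform(-amplitude, amplitude) for p in trajectory]


# Usage: the same gesture, replayed with slightly different motion each time.
exact = [0.0, 10.0, 20.0, 10.0, 0.0]
varied = with_variation(exact, amplitude=1.0)
```

Setting the amplitude to zero recovers the exactly repeatable motion, so a designer can treat the amount of variation as a single adjustable parameter.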

Granularity and emergence

In 1772-1779, Swedish engineer Kristofer Polhem created a series of small wooden objects describing basic mechanical elements for motion design: a Mechanical Alphabet. It consisted of 80 letters, each demonstrating a simple movement that is contained in a machine, e.g. translating rotary movement into reciprocating movement. If this principle of dissecting form and mechanics into single elements, or kinetic phrases, is combined with contemporary digital control structures and new materials and actuators, it becomes possible to imagine a system where a kinetic behavior could be designed both concretely and formally. This would allow a designer to easily merge kinetic elements into user interfaces as well as everyday objects and living and working environments.

Inventing such basic “grains” of motion in kinetic interactions also brings up the issue of emergence. Emergence, defined as the process by which a set of simple rules determines complex pattern formation or behavior, creates systems whose elements are thoroughly comprehensible individually (like ants in an ant colony), while the overall behavior of the system, functioning with decentralized control, is difficult to understand. Designing KOIs for emergence may create systems that might some day reflect some of the complexity of living organisms.

Future of Transformability

Today’s digital objects and systems are layered with functionality, which presents a new challenge for designers: how can forms support multiple functions while maintaining a simplicity in user interaction that clearly describes their functionality? In current products, multifunctionality is usually maintained at the expense of ergonomics or ease of use. Kinetic programmability in interface design may offer a method to address this, in the form of physical transformability. A kinetic surface or skin, or a transformable internal structure, can be linked to computational data sensed from the object’s use (gestural or positional controls) or from the surrounding environment, and the physical form of the object changes in response, making objects physically adaptable to their function or context. No longer does form follow function; form becomes function. While the current state of shape-changing objects may be relegated to the science fiction of ‘Transformers’, advances in shape memory materials and nanotechnology are bringing cutting-edge experiments to life.

As we move into the 21st century, it is clear that our relationship with motion needs to be reconsidered and revalued. The new class of Kinetic Organic Interfaces that is emerging is a step toward creating that change. The rapid development of new technologies, such as piezo motors and plastic polymer actuators, will potentially allow for the creation of efficient and inexpensive interfaces that can be used in applications for communication, information presentation, style and decoration, as well as many others. Developing such applications requires stepping outside the boundaries of classic HCI domains and combining expertise from robotics, haptics, design and architecture. The work in Kinetic Organic Interfaces is still in its infancy, and we consider this paper an invitation for discussion on the future of kinetic design in user interfaces and a stimulus for further research in this exciting and emerging area.

References

1. Aegis Hyposurface in http://www.sial.rmit.edu.au/Projects/Aegis_Hyposurface.php

2. Brett, G. and Cotter, S. Force Fields: Phases of the Kinetic. Barcelona, Actar, 2000.

3. Burdea, G. Force and Touch Feedback for Virtual Reality. John Wiley and Sons, 1996.

4. Dahley, A., Wisneski, C. et al. Water Lamp and Pinwheels: Ambient Projection of Digital Information into Architectural Space. Proceedings of CHI 98, ACM Press (1998).

5. Goldstein, S., Campbell, J. et al. Programmable Matter. Proceedings of Computer, IEEE 2005 38: 99-101.

6. Greyworld. “Source.” 2004.

7. Ishii, H. and Ullmer, B. Tangible Bits: Towards Seamless Interfaces between People, Bits and Atoms. Proceedings of CHI 1997, ACM Press, (1997).

8. Iwata, H., H. Yano, et al. Project Feelex: Adding Haptic Surfaces to Graphics. Proceedings of SIGGRAPH 2001, ACM Press, (2001).

9. Lerner, M. “Outerspace: Reactive robotic creature.” from http://www.andrestubbe.com/outerspace/, 2005.

10. Pangaro, G., D. Maynes-Aminzade, et al. The Actuated Workbench: Computer-Controlled Actuation in Tabletop Tangible Displays. UIST 2002, ACM Press (2002).

11. Patten, J. and Ishii, H. Mechanical Constraints as Computational Constraints. Proceedings of CHI 2007. ACM Press (2007).

12. Popper, F. Origins and Development of Kinetic Art. Greenwich, CT. New York Graphic Society: 1968.

13. Poupyrev, I. and Maruyama, S. Tactile interfaces for small touch screens. Proceedings of UIST 2003, ACM Press, (2003).

14. Poupyrev, I., Nashida, T., Okabe, M. Actuation and Tangible User Interfaces: the Vaucanson Duck, Robots, and Shape Displays. Proceedings of TEI’07. Baton Rouge, LA, 2007, pp. 205-212.

15. Poupyrev, I., D. Tan, et al. Tiles: A Mixed Reality Authoring Interface. Proceedings of Interact 2001.

16. Raffle, H., Parkes, A. et al. Topobo: A Constructive Assembly System with Kinetic Memory. Proceedings of CHI 04. ACM Press, (2004).

17. Riskin, J. “The defecating duck, or, the ambiguous origins of artificial life.” Critical Inquiry 2003, 20(4): 599-633.

18. Sutherland, I. The ultimate display. International Federation of Information Processing, 1965.

19. Vertegaal, R. and Poupyrev, I. Organic User Interfaces (Editorial). In Special Issue of Communications of ACM on OUI, June 2008.