
Introduction

“Organic User Interfaces have non-planar displays that may actively or passively change shape via analog physical inputs”

By Roel Vertegaal and Ivan Poupyrev, Guest Editors

Throughout the history of computing, developments in human-computer interaction (HCI) have often been preceded by breakthroughs in display and input technologies. The first use of a cathode ray tube (CRT) to display computer-generated (radar) data, in the Canadian DATAR [6] and MIT’s Whirlwind projects of the early 1950s, led to the development of the trackball and light pen. That development, in turn, influenced Sutherland’s and Engelbart’s work on interactive computer graphics, the mouse, and the graphical user interface (GUI) during the early 1960s. According to Alan Kay [3], seeing the first liquid crystal display (LCD) had a similar disruptive effect on his thinking about interactivity at Xerox PARC during the early 1970s. His vision of Dynabook led to the development of Smalltalk, the Alto GUI (1973), and eventually, the Tablet PC [2]:

“… Another thing that we saw in 1968 was a tiny 1” square first flat panel display down at the University of Illinois. We realized it was going to be a matter of years until you could put all the electronics… on the back of a flat panel display, which I later came to call the Dynabook.”

Figure 1. Readius rollable cell phone prototype by Polymer Vision [4].

Over the past few years, another quiet revolution has been brewing in some of the fundamental technologies used to create digital computing devices. While still limited in resolution, speed, color, and size, pixels made of electrophoretic ink (E-Ink) and light-emitting polymer technologies, combined with advances in organic thin-film circuit substrates, now allow for displays so thin and flexible they are beginning to resemble paper (see Figure 1). Display manufacturers are beginning to weave textile displays as well, by threading large arrays of tiny LEDs into fabrics and furniture. In parallel to these developments, advances in sensor technologies now allow for input devices to track the position of multiple fingers, twists, pressure, and acceleration on any surface. On the output side, miniature actuating devices, shape memory polymers, multi-layer piezoelectric sandwiches, and tiny ultrasound motors are beginning to allow for “Claytronic” interaction devices, with displays that actively reshape themselves [1]. All this is happening against a backdrop of microprocessors that are faster, smaller, and more energy-efficient than ever before, powered by super-thin, flexible polymer batteries that withstand tens of thousands of bends. Such phenomenal technological breakthroughs are opening up entirely new design possibilities for HCI, to an extent perhaps not seen since the days of the first GUIs. In this special section, we attempt to map out a future where these technologies are commonplace. A future led by disruptive change in the way we will use digital appliances: where the shape of the computing device itself becomes one of the key variables of interactivity. We have invited a number of top researchers in this field to share their ideas on this topic. This section covers three tightly knit themes, which define what we refer to as an Organic User Interface (OUI):

1. Input Equals Output: Where the display is the input device. The development of flexible display technologies will result in computer displays that are curved, flexible, or of any other form, printed on couches, clothing, credit cards, or paper. How will we interact with displays that come in any shape imaginable? What new interaction principles and visual designs become possible when curved computers are a reality? One thing is clear: current point-and-click interfaces designed for fixed planar coordinate systems, and controlled via some separate special-purpose input device like a mouse or a joystick, will not be adequate. Rather, input in this brave new world of computing will depend on multi-touch gestures and 3D-surface deformations that are performed directly on and with the display surface itself. In future interfaces, input and output design spaces are thus merged: the input device is the output device. We have invited two authors to discuss their work on this topic. Jun Rekimoto will share his thoughts on new directions in skin-based inputs: interaction techniques that are not only multi-touch, but that potentially follow any shape or form. A sidebar by Carsten Schwesig argues the finer points of the use of analog rather than discrete inputs for optimal organic interaction design.

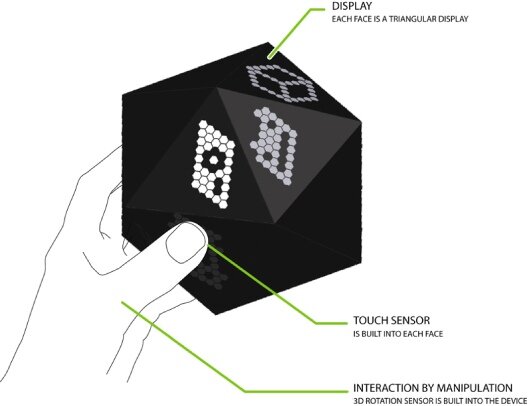

Figure 2. D20 is a concept for a multifaceted handheld display device shaped as a regular icosahedron. The user interacts with it by rotating it and touching its faces [5]. The visual interface is structured to take advantage of the device shape.

2. Function Equals Form: Where the display can take on any shape. Today’s planar, rectangular displays, such as LCD panels, will eventually become secondary when any object, from a credit card to a building, no matter how large, complex, dynamic, or flexible, will be wrapped with high-resolution, full-color, interactive graphics. Several pioneering projects are already exploring this future, such as the D20 concept, which proposed an interface for an icosahedral display [5] (see Figure 2). One important observation that emerges from such experimentation is that the form of the display equals its function. In other words, designers should tightly coordinate the physical shape of the display with the functionality that its graphics afford. Three contributions in this section address this topic of research. David Holman and Roel Vertegaal further elaborate on design principles of OUIs, as grounded in their experimentation with flexible computers and curved-computer interactions. In accompanying sidebars, Elise Co and Nikita Pashenkov present an overview of the new flexible display technologies that underlie OUIs, while Eli Blevis ponders the implications for sustainability of the potential widespread use of these technologies in product design.

3. Form Follows Flow: Where displays can change their shape. In the foreseeable future, the physical shape of computing devices will no longer necessarily be static. On the one hand, we will be able to bend, twist, pull, and tear apart digital devices just like a piece of paper or plastic. We will be able to fold displays like origami, allowing the construction of complex 3D structures with continuous display surfaces. On the other hand, augmented with new actuating devices and materials, future computing devices will be able to actively alter their shape. Form will be able to follow the flow of user interactions when the display, or entire device, is able to dynamically reconfigure, move, or transform itself to reflect data in physical shapes. The 3D physical shape itself will be a form of display, and its kinetic motion will become an important variable in future interactions. In this section Amanda Parkes, Ivan Poupyrev, and Hiroshi Ishii examine kinetic interaction design as an area of research in OUI. In a sidebar, artist Sachiko Kodama gives her thoughts on the use of physical transformability in interactive art forms. Finally, architects Kas Oosterhuis and Nimish Biloria outline their vision of a future in which entire buildings and cities are made out of networks of actuated, interactive, organic computers.

Organic User Interfaces

These three general directions together comprise what we refer to in this section as Organic User Interfaces: user interfaces with non-planar displays that may actively or passively change shape via analog physical inputs. We chose the term “organic” not only because of the technologies that underpin some of the most important developments in this area, that is, organic electronics, but also because of the inspiration provided by millions of organic shapes that we can observe in nature, forms of amazing variety, forms that are often transformable and flexible, naturally adaptable and evolvable, while extremely resilient and reliable at the same time. We see the future of computing flourishing with thousands of shapes of computing devices that will be as scalable, flexible, and transformable as organic life itself. We should note that the OUI vision is strongly influenced by closely related areas of user interface research, most notably Ubiquitous and Context-aware Computing, Augmented Reality, Tangible User Interfaces, and Multi-touch Input. Hiroshi Ishii opens this section by exploring some of those historical trends that led to OUIs. Naturally, OUIs incorporate some of the most important concepts that have emerged in the previous decade of HCI, in particular embodied interaction, haptic, robotic, and physical interfaces, computer vision, the merging of digital and physical environments, and others. At the same time, OUIs extend and develop those concepts by placing them in a framework where our environment is not only embedded with billions of tiny networked computers, but where that environment is the interface, physically and virtually reactive, malleable, and adaptable to the needs of the user.

There has always been a mutually beneficial symbiotic relationship between advances in basic computing technologies and HCI research. New technologies inspire new interface paradigms, while new interfaces utilizing these emerging technologies encourage their continued refinement by revealing aspects most useful in their application. We hope the ideas and projects presented in this special section encourage a dialogue on organic design that inspires designers and HCI researchers to invent that future reality in which these exciting technologies will benefit people in their natural ecologies. And we hope these stories inspire physicists and engineers alike to continue inventing and refining the very basic technologies so critical to realizing the future of computing.

This diagram shows how OUI interaction styles might eventually relate to those found in traditional GUIs. In OUIs, simple pointing will be supplanted by multi-touch manipulations. Although menus will still serve a purpose, many functions may be triggered through manipulations of shape. OUIs will take the initiative in user dialogue through active shape-changing behaviors. Finally, OUIs’ superior multitasking abilities will be based on the use of multiple displays with different shapes for different purposes. These will appear in the foreground when picked up or rolled out, and they will be put away when no longer needed.

References

1. Goldstein, S., et al. Programmable matter. IEEE Computer 38 (2005), 99–101.

2. Johnson, J., et al. The Xerox Star: A retrospective. IEEE Computer 22 (1989), 11–29.

3. Kay, A. On Dynabook. www.artmuseum.net/w2vr/archives/Kay/01_Dynabook.html

4. Polymer Vision. Readius: Reading eReading Comfort in a Mobile Phone, 2007.

5. Poupyrev, I., et al. D20: Interaction with multifaceted display devices. Extended Abstracts of ACM CHI’06 (Montreal, 2006), 1241–1246.

6. Vardalas, J. From DATAR to the FP-6000 computer: Technological change in a Canadian industrial context. IEEE Annals of the History of Computing 16, 2 (1994).

Bios

Roel Vertegaal (roel[at]cs[dot]queensu[dot]ca) is an Associate Professor of Human-Computer Interaction at Queen’s University in Ontario, Canada, where he directs the Human Media Laboratory.

Ivan Poupyrev (poup[at]csl[dot]sony[dot]co[dot]jp) is a member of the Sony Interaction Laboratory in Tokyo, Japan.
